There is a class of production incident that resists conventional diagnosis. The system did exactly what it was supposed to do. The API was used correctly. The documentation, consulted afterward, turns out to have been accurate all along. And yet the outcome violated every expectation — a job that rewrites ten times more data than expected, a container that provides less isolation than its interface suggests, a join that degrades catastrophically at scale.

Beyond Leaky Abstractions

The standard diagnosis is “leaky abstraction,” a term Joel Spolsky popularized in 2002.1 All abstractions leak, he argued. The implementation details eventually surface. This is true, but it explains very little. It doesn’t tell you which abstractions leak, where they leak, or why the leaks are so consistently surprising. It names the phenomenon without explaining its structure.

I want to propose a more precise diagnosis — one that draws on semiotics2 rather than software engineering, and specifically on the work of Charles Sanders Peirce and Umberto Eco. The claim is this: many of the most persistent and surprising performance pathologies in modern data systems are semiotic failures — predictable, recurring, and invisible to the user the interface presupposes but does not fully equip.

A Fifty-Year-Old Promise

The contract embedded in these interfaces is not new. Edgar F. Codd articulated it in 1970, in the paper that founded the relational model.3 One of its central principles was data independence — the idea that queries should be written against a logical model, entirely insulated from the physical layout of the data. The user thinks in relations. The system handles storage, access paths, and execution strategy.

It was a radical and largely successful idea. The cost-based optimizer that Selinger and her colleagues built for IBM’s System R in 1979 was its practical realization:4 given a declarative SQL query, the system would enumerate possible physical execution plans and choose the cheapest one. The user never needed to know which plan was chosen.

But the System R team soon recognized a tension they could not quite resolve. If the physical layer is fully hidden, users cannot understand why queries are slow. They cannot diagnose anomalies. They cannot make informed decisions about schema design or query structure. The solution — EXPLAIN, and later EXPLAIN ANALYZE — was already an admission: pure logical transparency is not enough. At some point, the physical layer has to become visible.

Spark, Delta Lake, and container runtimes have re-enacted this same drama at a different scale and with a fundamentally different physical architecture. The promise is Codd’s promise, restated fifty years later. The tension is the same tension. What has changed is the distance between the logical interface and the physical reality — and that distance, in distributed systems, is vast.

The Interface as Sign

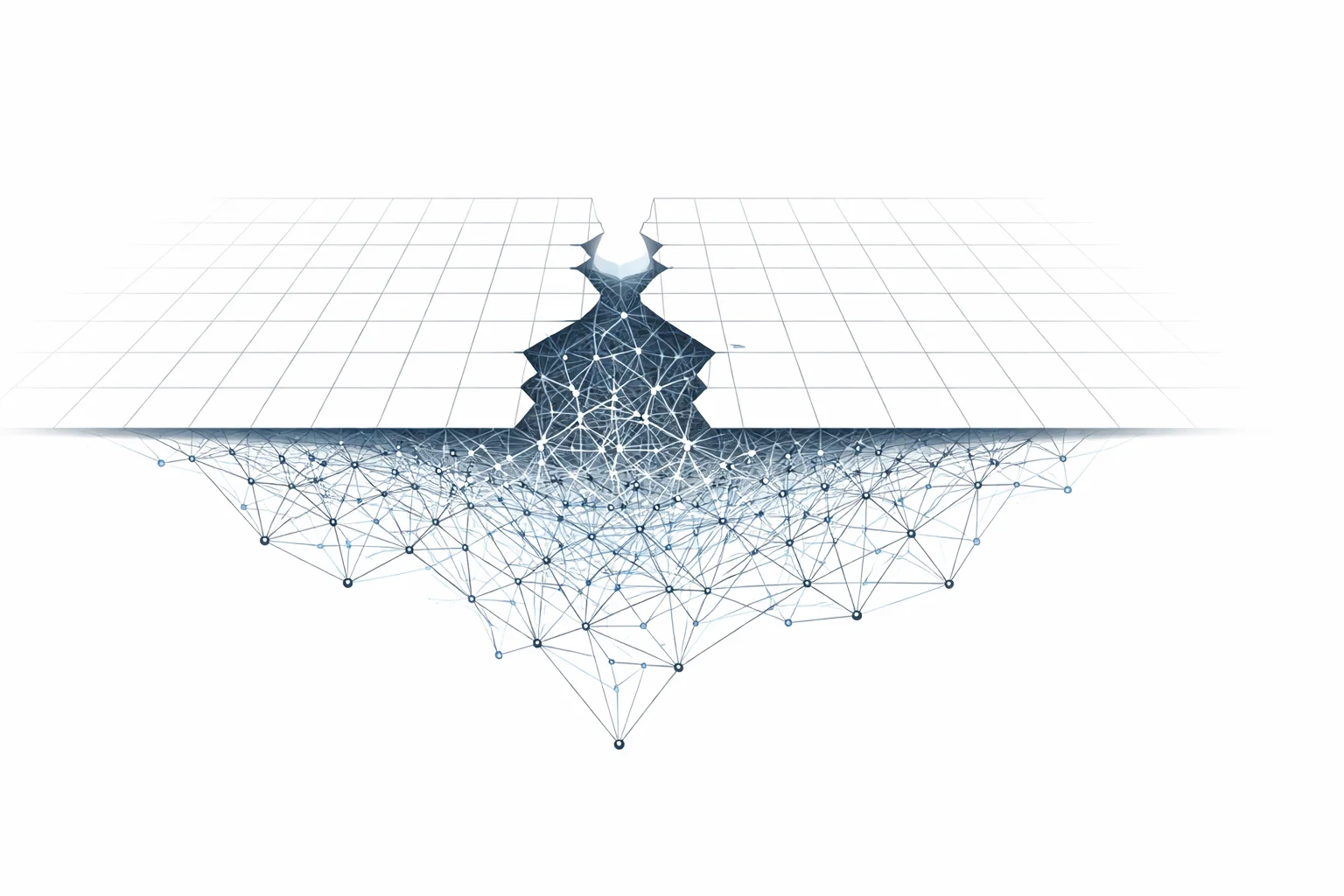

Consider df.join(other, on="key"). The syntax denotes a logical operation — a relational join with clear set-theoretic semantics. But what the syntax produces in the mind of an experienced engineer is something richer: an expectation of predictable cost, transparent execution, and uniform scaling. In Peirce’s triadic model of the sign — where every sign consists of a representamen (the sign-vehicle itself), an object (what the sign stands for), and an interpretant (the meaning the sign produces in its interpreter) — this accumulated expectation is the interpretant: not just the formal definition of the operation but the practical assumptions carried forward from prior systems. The practitioner brings to the interface not just its definition but the full weight of past experience with databases and dataframe APIs that behaved consistently.

The interpretant is legitimate — the natural product of a sign that activates certain encyclopedic competencies and leaves others dormant. The problem lies in the reader the interface presupposes: a reader whose competencies cover the ordinary case, and who is systematically unequipped for the cases that matter most.

The Model Reader

Eco’s concept of the Model Reader gives this observation its theoretical form.5 Every text, Eco argued, presupposes a model reader — a specific set of competencies, knowledge, and interpretive habits. The text inscribes a particular reader into its structure. The model reader is not a real person but a textual strategy: the set of competencies the text assumes in order to function as intended.

Software interfaces do the same thing. The designers of Spark’s DataFrame API presupposed a model user: someone who thinks in terms of logical transformations, who trusts the optimizer to make good decisions. That model user is well-served by the API in most cases.

But data systems at scale require a different reader — someone who understands file layout, partition statistics, shuffle cost, and optimizer visibility. That reader was not presupposed by the interface. They had to become that reader themselves, through failures and post-mortems and the gradual accumulation of knowledge that no documentation quite captures.

Encyclopedia, Dictionary, Breakdown

What determines the competencies the model reader carries? Eco called it the encyclopedia:6 the open, rhizomatic web of knowledge, assumptions, and inferential pathways through which a reader interprets any sign. Meaning, for Eco, is never self-contained — it lives in a network of connections that the reader activates. When that network is simplified, its connections pruned, its ambiguities resolved into univocal definitions, the encyclopedia degenerates into a dictionary.

An API is a dictionary. It takes the encyclopedic complexity of a system — its physical behaviors, its edge cases, its failure modes — and compresses it into a closed set of operations with precise, minimal definitions. But the model reader presupposed by that interface still carries an encyclopedia — whose rhizomatic branches the dictionary has anaesthetized but never eliminated.

The failures described here arise at those branches. Consider SELECT * FROM orders WHERE status = 'pending'. The dictionary entry is precise: filter rows by predicate, return all columns. But the reader’s encyclopedia fills in unstated assumptions — that an index exists, that the scan will be efficient, that “all columns” is cheap. Whether the query triggers an index lookup or a full table scan, whether it reads from cache or from disk, whether “all columns” means five fields or fifty including serialized blobs — none of this is encoded in the syntax. The representamen is identical across all these cases. The physical cost varies by orders of magnitude. This is not a documentation failure but a semiotic one: the interface presupposes a reader whose encyclopedia includes the ordinary case but not its pathological edges.

The dictionary/encyclopedia distinction explains the structure of the gap. But it does not yet name the moment when the gap becomes visible to the practitioner. Terry Winograd and Fernando Flores, drawing on Heidegger’s analysis of tools and transparency, gave that moment a precise name: breakdown.7 A system functions smoothly as long as the user and the system share an implicit interpretive horizon. When that horizon fractures, the user is forced to confront the machinery they had been trusting invisibly. The breakdown is not a system failure — it is a failure of the shared interpretive frame.

This is exactly what happens when a Spark job runs for six hours instead of twenty minutes, or when a MERGE rewrites a terabyte of data to update a thousand rows. The system has not failed. The interpretive frame has. The engineer must reconstruct, from query plans and executor logs, a physical model the interface was designed to hide.

The gap between the reader the interface assumes and the reader the system actually requires is a central source of performance pathology in modern data engineering.

Four Fracture Points

Each of the following cases shares a structure: an interface that activates the wrong expectation, a physical reality that diverges from it, and a failure mode that becomes predictable once the divergence is named.

Spark and the compiler you do not see

The phrase “Spark is lazy” is the dictionary entry. The encyclopedic reading it activates is: execution is deferred, but the cost of execution is proportional to what you wrote. In reality, Spark functions as a dataflow compiler. Between your code and physical execution sits Catalyst, which rewrites and optimizes the logical plan in ways that are invisible to the caller. The model reader presupposed by “lazy evaluation” is someone who expects deferred but proportional execution. That reader is not wrong — but they are unequipped for the moment when the optimizer’s decisions depend on information that may or may not be available at plan time.8

Delta Lake MERGE and the upsert that rewrites everything

MERGE INTO looks like a row-level operation. The dictionary entry says: match rows, update or insert as appropriate. The encyclopedic reading is: only affected rows are touched. Partition pruning and data skipping can narrow the candidate set substantially, but the real constraint is that the storage layer operates at file granularity: once a file contains a modified row, the entire file must be rewritten. The result is that MERGE often rewrites far more data than the logical operation requires. The representamen evokes surgical precision. The object is a sledgehammer.9

The skew that statistics cannot see

The standard model of data skew — a small number of keys that appear disproportionately often — is encoded in every explanation of the problem and in the catalog statistics that optimizers use to detect it. But skew can also arise from keys that appear exactly once but carry payloads of radically different sizes — payload skew, as opposed to key frequency skew. This form of skew is invisible to cardinality statistics. No interface signals the difference, and no optimizer fully compensates for it — adaptive query execution can detect oversized partitions after the shuffle, but the interface provides no signal in advance, and the underlying statistics remain blind to the cause.

This case has the same structure as the others, but displaced one level. The dictionary here is not the operation itself but the statistics that describe the data — cardinality counts, partition sizes, histogram buckets. That dictionary presents itself as a complete account of distribution. It has anaesthetized the encyclopedic branch that matters most: payload variance. The reader whose encyclopedia includes only the dictionary’s entries sees sufficient information where there is a critical gap. Eco defined semiotics as the discipline that studies “everything which can be used in order to lie.”10 The statistics do not merely omit — they signify completeness where completeness does not hold. In this precise sense, the dictionary lies.11

Containers and the isolation that is not there

“Container” is the most semiotic term in modern infrastructure. The word activates a powerful interpretant of strong isolation — reinforced not just by the metaphor (the shipping container, the sealed box) but by the operational interface: docker run produces what looks like a separate machine, with its own filesystem, process tree, and network stack. The physical reality is simpler: Linux containers share a kernel. They are namespace and cgroup boundaries, not trust boundaries. A container escape exploits a kernel vulnerability that exists precisely because the isolation the interface signifies is not the isolation the kernel provides. The interface presupposes a model reader who is building a deployment pipeline. It does not presuppose the model reader who is running untrusted code. This is not a failure of containers — it is a semiotic mismatch between the metaphor the interface evokes and the guarantees the kernel actually provides.12

Why Interfaces Are Designed This Way

The logical transparency that Spark, Delta, and container runtimes offer is genuinely valuable — the same value Codd identified in 1970. Data independence allows practitioners to reason about complex systems without holding the entire physical implementation in their heads. The model reader these interfaces presuppose is not wrong; it is simply incomplete. And that incompleteness concentrates at the boundaries of the ordinary case: at scale, under skew, in adversarial environments. The interface is most misleading precisely where the stakes are highest.

In classical relational systems, the logical-physical distance was relatively contained — a handful of join algorithms, a single node, stable statistics — and failure modes tended to be gradual. In modern distributed systems, that distance is vastly larger: between a logical operation and its execution sit optimizers, adaptive planners, distributed schedulers, shuffle mechanisms, storage layouts, and incomplete statistics. The abstraction does not degrade smoothly. It holds — until it doesn’t. A Spark job runs reliably for months, then becomes ten times slower because the data distribution crossed a threshold the planner had not anticipated. The competencies required to diagnose this — the conditions under which AQE intervenes, the difference between logical and physical partitioning, the cost structure of shuffles — are not encoded in any interface. They are learned through production incidents and the slow reading of query plans.

The semiotic reading does not make these problems easier to fix. An experienced systems engineer will recognize every failure mode described here without needing Peirce to explain them.

What the framework provides is not diagnosis but taxonomy: a structural account of why the same class of failure recurs across systems that share nothing except the decision to separate the logical interface from the physical reality. That is a modest claim, and I think a correct one.

Postscript

I studied semiotics under Umberto Eco at the University of Bologna. I work on distributed data systems. The connection between these two bodies of work did not occur to me for a long time. When it did, it seemed obvious — not as a metaphor, but as a structural analogy. The problems share the same architecture. The vocabulary is different.

If you have found the semiotic framing useful, the primary texts are Eco’s A Theory of Semiotics (1976) and The Role of the Reader (1979). Peirce’s collected papers are harder going, but the triadic sign model is accessible through secondary literature. Winograd and Flores’s Understanding Computers and Cognition (1986) is the most direct bridge between this tradition and software systems — it remains underread in engineering circles and does not deserve to be.

For the historical background on data independence and the optimizer, Codd’s 1970 paper is short and still worth reading directly. Selinger et al.’s 1979 paper on System R is where the logical/physical tension becomes fully visible as an engineering problem. The connection to compiler-style optimization becomes particularly clear in these database papers, where equivalent logical expressions are explicitly mapped to alternative physical execution plans.

-

Joel Spolsky, “The Law of Leaky Abstractions,” 2002. ↩︎

-

“Leaky abstraction” names the eventual surfacing of hidden implementation details. The semiotic account proposed here is narrower: it explains why certain interfaces systematically produce the wrong practical expectations even when their formal semantics are correct. ↩︎

-

Edgar F. Codd, “A Relational Model of Data for Large Shared Data Banks,” Communications of the ACM 13, no. 6 (1970). ↩︎

-

Patricia Selinger et al., “Access Path Selection in a Relational Database Management System,” Proceedings of the 1979 ACM SIGMOD International Conference on Management of Data. ↩︎

-

Umberto Eco, The Role of the Reader: Explorations in the Semiotics of Texts (Bloomington: Indiana University Press, 1979). ↩︎

-

Umberto Eco, A Theory of Semiotics (Bloomington: Indiana University Press, 1976). The dictionary/encyclopedia distinction is developed further in Semiotics and the Philosophy of Language (1984). ↩︎

-

Terry Winograd and Fernando Flores, Understanding Computers and Cognition: A New Foundation for Design (Norwood, NJ: Ablex, 1986). The concept of breakdown draws on Heidegger’s distinction between ready-to-hand (Zuhandenheit) and present-at-hand (Vorhandenheit) in Being and Time. ↩︎

-

For the full technical analysis, see Spark Is Not Just Lazy. Spark Compiles Dataflow. ↩︎

-

See Delta Lake MERGE Is Not a Simple Upsert. What Actually Happens at Scale. ↩︎

-

Umberto Eco, A Theory of Semiotics (1976), §0.1.3: “Semiotics is in principle the discipline studying everything which can be used in order to lie. If something cannot be used to tell a lie, conversely it cannot be used to tell the truth: it cannot in fact be used ’to tell’ at all.” ↩︎

-

See Fixing Skewed Nested Joins in Spark with Asymmetric Salting ↩︎